Frequency of derivation engine

ctmorrison

I'm wondering how often the derivation engine runs. I'm seeing derived streams take 5 minutes or so to get up to date. Although the derived values are eventually time-stamped correctly, I'm concerned the delay in derivation might lead users to misinterpret the dashboards they're seeing.


MikeMills

It's averaging a run every 3.6 minutes. The runtime can change as we add servers and as the number of streams increases.
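As a rough back-of-envelope check relating the 3.6-minute run interval to the ~5-minute lag reported in the first post, assume (these are assumptions, not statements from the thread) that derivation passes run back-to-back and each takes $T \approx 3.6$ minutes, that a new sample arrives at a uniformly random moment and is only picked up by the next pass to start, and that its stream is reached at a uniformly random point within that pass. The delay $D$ from arrival to derived value is then the wait $W$ for the next pass plus the position $P$ within it:

$$
D = W + P,\quad W, P \sim \mathcal{U}(0, T)
\;\Rightarrow\;
\mathbb{E}[D] = \tfrac{T}{2} + \tfrac{T}{2} = T \approx 3.6\ \text{min},
\qquad
D_{\max} = 2T \approx 7.2\ \text{min}.
$$

Under those assumptions, a lag of about 5 minutes falls between the average and the worst case.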


MikeMills

Derivation runtime has increased a lot since spring due to added enhancements and the growing number of streams in the system. The current runtime is unacceptable. We'll take a hard look at it sometime this month and try to bring it back down. Thanks for the feedback!


ctmorrison

Great news, and I hope you're successful in bringing the times back down. If not, please let me (us) know so I can reconsider how we push data. I'm currently pushing it in a semi-compressed format, but may need to revert to a more decompressed format that does not rely on derivations. Thanks!


MikeMills

Derivation is suffering from time spent waiting for components to be unlocked. Components that combine many derived streams with feed streams suffer the most. We're working on a better locking solution to improve the runtime.


MikeMills

We're trying some different solutions. You might see some spikes in derivation runtime (up or down) over the next few days. We started last night.


MikeMills

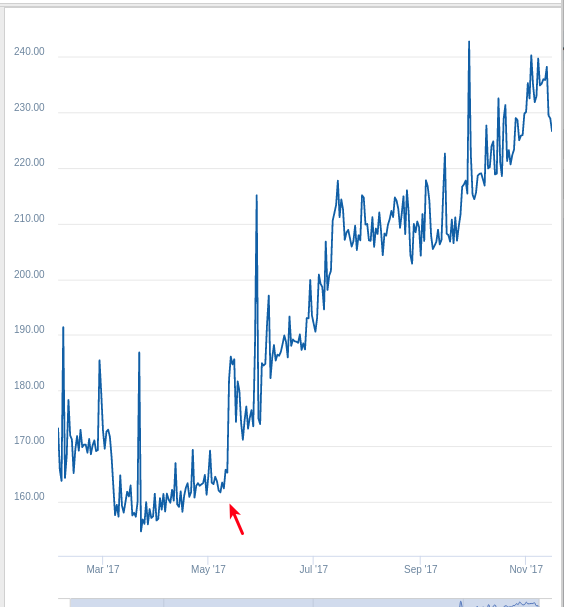

Here's a graph of derivation runtime since spring. As you can see, the trajectory is not good.


MikeMills

One of the causes of long derivation runtimes was the time a derived stream spent waiting to be unlocked. Locking occurs at the component level, so if another stream under the same component is locked, the derived stream has to wait. The lock can be held because other streams under the component are being derived by another server or process (derivation runs in parallel across multiple processes on multiple servers). A component can also be locked while one of its non-derived streams is accepting a feed (new or changed samples). Components with a lot of feed streams and derived streams suffer the most from this issue.

Moving streams to other components will not make derivation runtimes faster for an individual organization. If everyone did it, it would help, but we don't expect anyone to do that; we need to handle that scenario better. For now, we decreased the lock timeout on derived streams from 60 to 5 seconds, so you may see derivation job errors reporting lock timeouts. We are still not satisfied with the current runtime or with this temporary solution, and we are exploring other causes and better solutions.
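To illustrate the timeout trade-off described above, here is a minimal Java sketch under simplifying assumptions. A single in-process `ReentrantLock` stands in for the component lock; judging by the `StoreHelper.lockRow` frame in the job error posted in this thread, the real lock appears to be a storage-level row lock shared across servers, which this does not reproduce. `Component`, `deriveStream`, and `runDemo` are hypothetical names, not the platform's API. A simulated feed holds the component lock while a derived stream tries to acquire it with a bounded `tryLock`, so the derived stream reports a timeout instead of waiting; the timeout is scaled down to 200 ms so the demo runs quickly.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.locks.ReentrantLock;

public class ComponentLockSketch {

    // Hypothetical stand-in for a component: one lock guards every stream under it.
    static final class Component {
        final String name;
        final ReentrantLock lock = new ReentrantLock();

        Component(String name) {
            this.name = name;
        }
    }

    // Hypothetical derivation step: wait at most timeoutMillis for the component lock,
    // then report a lock-timeout error instead of blocking indefinitely.
    static String deriveStream(Component c, String stream, long timeoutMillis) {
        boolean acquired;
        try {
            acquired = c.lock.tryLock(timeoutMillis, TimeUnit.MILLISECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return "ERROR " + stream + ": interrupted while waiting for " + c.name;
        }
        if (!acquired) {
            return "ERROR " + stream + ": timeout while waiting for " + c.name + " to unlock";
        }
        try {
            // Real derivation work would happen here while the component is locked.
            return "OK " + stream + " derived";
        } finally {
            c.lock.unlock();
        }
    }

    // Runs one contended attempt (a simulated feed holds the lock) and one uncontended attempt.
    static List<String> runDemo(long timeoutMillis) {
        Component comp = new Component("component-A");
        CountDownLatch locked = new CountDownLatch(1);
        CountDownLatch release = new CountDownLatch(1);

        // Simulated feed ingestion on a sibling, non-derived stream: it holds the
        // component lock until told to release it.
        Thread feed = new Thread(() -> {
            comp.lock.lock();
            try {
                locked.countDown();
                release.await();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            } finally {
                comp.lock.unlock();
            }
        });

        List<String> results = new ArrayList<>();
        try {
            feed.start();
            locked.await();
            // The feed holds the lock, so this attempt gives up after the timeout.
            results.add(deriveStream(comp, "derived-1", timeoutMillis));
            release.countDown();
            feed.join();
            // The lock is free now, so derivation proceeds.
            results.add(deriveStream(comp, "derived-1", timeoutMillis));
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("demo interrupted", e);
        } finally {
            release.countDown(); // never leave the feed thread blocked
        }
        return results;
    }

    public static void main(String[] args) {
        // 200 ms stands in for the 5-second timeout so the demo finishes quickly.
        runDemo(200).forEach(System.out::println);
    }
}
```

Running `main` prints the timeout line first and the `OK` line second. The point of a short timeout is that a derivation worker stops idling behind a busy component; the cost is a failed attempt, which is why lock-timeout job errors become visible.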


MikeMills

Here's the job error you will see if your streams are hitting the 5-second lock timeout:

5 second timeout while waiting for a resource to unlock.
Lock Holder's Stack:
com.-gs-.-lab-.storage.StoreHelper.lockRow(StoreHelper.java:962)
com.-gs-.-lab-.storage.ustream.UComponentStore.lock(UComponentStore.java:2084)
com.-gs-.-lab-.jobs.sysJobs.DerivedStreamsJob2$ProcessStreamDerivationMapper.map(DerivedStreamsJob2.java:130)
com.-gs-.-lab-.jobs.sysJobs.DerivedStreamsJob2$ProcessStreamDerivationMapper.map(DerivedStreamsJob2.java:1)
...


MikeMills

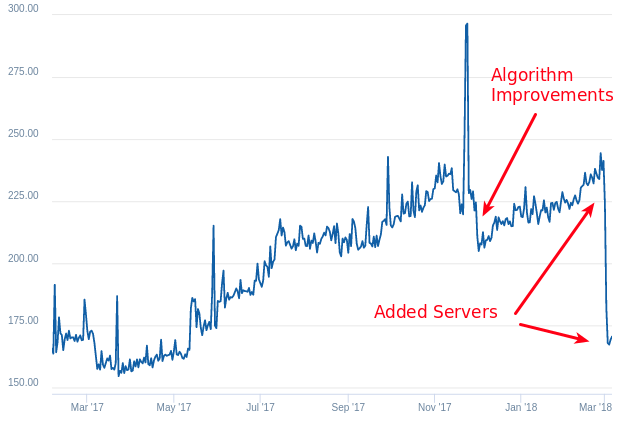

Here's a graph of daily average derivation runtimes. Code improvements and adding more servers sped things up.
